Before we begin …

Operating System differences …

I’m writing this based on the two QNAP NAS devices (TS-869 Pro and TS-569L) I have, but the procedure for any MySQL setup will work the same. Just keep in mind that the MySQL versions should be the same (or at least have a very very very similar version number).

On a QNAP the entire MySQL business runs under Linux, but the settings are pretty much the same for Windows or MacOS X setups. You just might need to go find the MySQL config file since the path I discuss here are QNAP specific.

What we need …

- Two MySQL servers …

- … running the same MySQL versions (recommended),

- … having phpMyAdmin or MySQL WorkBench available to access each server (optional),

- … and/or having some kind of shell access to either server (direct, SSH, Telnet, etc).

Assumptions …

For the convenience of this article we will define the following (replace with the IP Addresses applicable for your setup):

- Master (the Source), with IP address 192.168.1.100

- Slave (Target/Copy), with IP address 192.168.1.200

Other assumptions, that are not required:

- Both MySQL Servers run under Linux (QNAP)

- Both MySQL Servers run the same MySQL version (default QNAP version)

Methods for Automatic MySQL backup

Snapshot Dumps

Backing up MySQL data can be done with the usual dump option, which can dump all the data as a big pile of SQL statements which at a later time can be executed to populate a MySQL setup. The big downside: it’s just a snapshot, so you’ll need to run that kind of dump frequently (cron job for example) to not loose too much data changes in between. So also have to setup a Cron job to run a script at a given intervals.

For those who have worked with these kind of dump of large databases: export and import are relatively slow, produces often big files, and you can run into unexpected errors and time-outs (phpMyAdmin).

Not really an option I’d like to use since I’m looking for realtime backups …

Creating such dumps is not useless, so if you’d like to read more about this method, read our Article on How to Export/Import MySQL Databases.

Replication

MySQL has replication option build in, which can be used to replicate data realtime-ish (it’s not really real-time) to another server, which can be used for example when your company has multiple sites and you want to use the data of the main office elsewhere.

The simplified way of looking at this:

Each time something changes on the Master Database, the appropriate SQL statements will be saved in a so called “binary” log. As soon as a Slave connects, the Slave will ask for these statements and will execute them at it’s own pace. Which means: the slave doesn’t need to be connected 100% of the time, which is great for when a connection gets interrupted or when connections are just sporadic …

Awesome! Just what we need.

Nomenclature, Operating System and Versions …

Master

The “Master” is the main source of your data, you main database server, or which ever you want to call it.

Slave

The “Slave” is the database server that holds to copy of the data from the “Master”.

Versions

Master and Slave do not have to be running on the same Operating System, but it’s recommended to have the same MySQL version running on both Master and Slave. The MySQL version often can be of the same “main” version, for example both MySQL servers are running a 5.x version. You’ll have to test and see if it works though, since differences between say 5.0 and 5.7 could be significant for replication. See the MySQL manual for more info on version compatibility.

Setup for Automatic MySQL Backup through Replication

Before we can begin, you’ll of course need to install and enable MySQL – which is pretty straight forward on a QNAP.

Go to “Control Panel” in the QNAP Web-interface. Under “Applications” you’ll find “MySQL Server“.

Check “Enable MySQL Server“. If you need remote access, ie. from a different computer, for example your PC or a XBMC box: check “Enable TCP/IP networking” as well (for example when you use MySQL WorkBench or any other SQL Management application that does not reside on your server).

Finally click “Apply“.

Tip : On this page you’ll also find a link to installing “phpMyAdmin”, incase you’d like to use it later.

go through these steps for Master and Slave.

Other Operating Systems

Linux users (don’t do this on your QNAP) simply type the following in a shell:

sudo apt-get install mysql-server mysql-client

Windows and MacOS X users can download MySQL from the MySQL homepage or setup WAMP Server or MAMP (or a similar distribution).

As of this point forward, I’ll assume a QNAP setup with MySQL and phpMyAdmin installed and active. Everything will be the same for other setups, however the path to my.cnf (the configuration file MySQL) and access to phpMyAdmin, might be different.

Setup the Master MySQL server

Steps:

- Enable the Master as “Master”

- Create a “Slave” user on the “Master”

- Restart the “Master” server

- Make a full SQL dump of the desired databases

– Set a server to Master

We will start by making one of the two MySQL servers the Master. For this we use the server at IP address 192.168.1.100 – replace this IP address with the IP address of your Master MySQL Server in the following examples. To make a server a “Master”, we will need to do some editing in the MySQL configuration file.

This file, my.cnf, can be found at /etc/config/my.cnf (for QNAP anyway). This location depends on your Operating System and type of installation. Certain Linux configurations, for example, have my.cnf store in /etc/mysql/.

Editing files remotely …

If you’re using a NAS, like a QNAP, then you’ll need to use SSH access to edit files.

You can however also use tools like WinSCP (Windows), Filezilla (Windows, MacOS X, Linux) or Forklift (MacOS X) to edit the files without the need to manually type all the commands.

In the my.cnf file, of the Master MySQL Server (/etc/config/my.cnf) we will need to uncomment or add the following:

1

2

3

4

5

| #Master Settings

log-bin=mysql-bin

server-id=100

innodb_flush_log_at_trx_commit=1

sync_binlog=1 |

Line 2 sets the file where “changes” are stored for the Slave to pickup.

Line 3 is something to pay attention to, as it gives the master an ID.

This ID needs to be unique in your Master-Slave network and has to be a number in the 1 to 4294967295 range. In my example I gave it the last number of the IP address, so it’s easier to remember and recognize, but you can pick any number you like.

Lines 4 and 5 are optional, but recommended in the MySQL manual. If I understood things correctly, it will sync and flush transmitted data, so you log file doesn’t become huge.

Additional options …

There are some additional options you might or might not want to use. In my setup I didn’t use either of these.

Bind to IP Address

Some Linux setups appear to like a proper bind statement in the my.cnf file, but I haven’t used it.

1

2

3

4

| # Choose either localhost (bind 127.0.0.1)

# or the IP address of your server, for example:

bind 192.168.1.100 |

Limit the databases that can be replicated

You can limit the databases you’d like to replicate. This is useful when you have a lot of additional databases that you do not wish to replicate. Limiting the databases that can be replicated helps limiting the space needed for the log file used for replication – the “list” of changes that are saved will now be limited to the databases you actually want to replicate.

Select Databases

In the example below, we use “binlog-do-db” to only replicate the database “test”.

You will need to add this line for each database you’d like to specify (see also: MySQL Manual) – a comma separated list will work as well, even though the manual states that you have to add a line for each database.

Exclude Databases

Instead of selecting the desired databases, you can also define what databases to ignore with “binlog_ignore_db” which works the same way. To replicate all databases except “test”:

A common exclusion could be (I actually use this one):

1

| binlog-ignore-db=test,information_schema,mysql |

– Create replication user on the master

For the Slave to get access to the Master databases, a dedicated user should be created with REPLICATION SLAVE rights.

You could add these rights to an existing user of course, but I recommend creating a dedicated user.

The following SQL statements will create this user with the name “slave_user” – execute it in phpMyAdmin, MySQL WorkBench or from the MySQL command line. Don’t forget to change ‘password’ to the password you’d like to use for this new user.

1

2

| GRANT REPLICATION SLAVE ON *.* TO 'slave_user'@'%' IDENTIFIED BY 'password';

FLUSH PRIVILEGES; |

– Restart the Master

After these modifications, you’ll need to restart the MySQL server, which can be done in two ways.

From the command line:

QNAP (via SSH) and certain Linux versions:

/etc/init.d/mysqld.sh restart

Other Linux versions might use:

sudo service mysql restart

Windows:

net stop MySQL || net start MySQL

From the QNAP Web-interface:

Or on a QNAP go to “Control Panel” “Application” “MySQL Server” and remove the checkmark in front of “Enable MySQL Server“, click “Apply“, check the option “Enable MySQL Server” again and click “Apply” again.

You can ask the Master for it’s status by using:

– Make the initial data dump for the slave

After restarting the server, the replication log file should start. However, to get started with the initial data on the Slave, we will need to make a dump of the current data first.

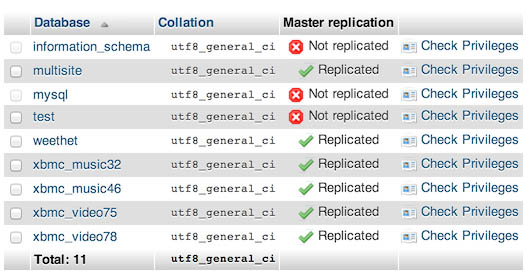

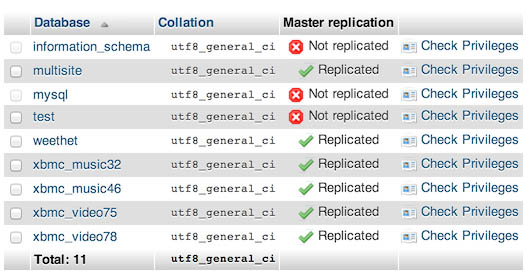

Note : When opening phpMyAdmin and viewing the available databases (click the “databases” tab), you should see right away which ones are replicated and which ones are not replicated. In the screenshot below I used binlog-ignore-db=test,information_schema,mysql . See how everything is marked as “ Replicated” except “information-schema”, “mysql” and “test”?

MySQL – Databases that can be replicated

To play catch up, we need to dump the database to a SQL file so we can import the initial “status” into our Slave MySQL Server.

We have 3 options: phpMyAdmin, MySQL Workbench and MySQL Command-line.

Read the MySQL Export and Import Data article for how to make an initial dump with any of these 3 tools.

Setup the Slave MySQL Server

Steps:

- Initial import of data

- Prepare my.cnf for the Slave

- Start the Slave

- Fixing initial Replication errors on the Slave

– Initial Slave import

The first thing we do is importing the export we just did from the Master into the Slave database. This way we have our “base” data complete for when replication starts.

Again: read the MySQL Export and Import Data article for how to make an initial import.

After your initial import, please verify that everything indeed did get replicated – I usually cherry pick a few tables and compare them with the tables of the Master.

– Prepare in the Slave my.cnf

As sees with the Master Server, we will need to edit the my.cnf file. The following lines need to be added or uncommented in the my.cnf file:

1

2

3

4

5

6

7

8

| #Master - Settings added for replication

log-bin=mysql-bin

relay-log=mysql-relay-bin.log

server-id=200

binlog-ignore-db=test,information_schema,mysql

master-host=192.168.1.100

master-user=slave_user

master-password=password |

Don’t forget to change the lines 4-8 to match your desired server ID (not the same as the Master server-id!), databases to replicate or ignore, Master IP address, username and password.

After saving the changes, restart MySQL (see the Master description above).

Optional:

The following SQL statement, execute in phpMyAdmin, MySQL WorkBench or MySQL command-line, basically does the same as the last 3 lines in the previous code, in case you’d rather not have this info in a readable format in your my.cnf file.

1

| CHANGE MASTER TO MASTER_HOST='192.168.1.100',MASTER_USER='slave_user', MASTER_PASSWORD='password'; |

– Starting and Monitoring the Slave

Now that we have all settings ready, we can start the slave with the following SQL statement:

Note however that in case something goes wrong, “START SLAVE” will not notify you about this.

To view the status of the Slave replication use the following SQL statement:

The latter is a great tool to see if there are any issues with replication. Check the columns “Last_Error” and “Last_SQL_Error” …

Tip: Checking Status with phpMyAdmin

For those that have phpMyAdmin available for Master and/or Slave: explore the “Replication” tab.

If you’ve done all previous settings, then you’ll find there easy accessible links to see either Master or Slave Replication Status in a more readable format (command-line users can use “SHOW SLAVE STATUS\G”, but this does not work in phpMyAdmin).

– Initial Replication Errors

One of the “issues” I ran into, with the initial start of the Slave, was that the Slave reported “Duplicate Key” errors – some of the records in the dump were also recorded in the bin-log file of the Master and caused conflicts. This will STOP the Slave …

Some guides I’ve read suggest using the Master POSITION, but I’ve found out the hard way, that when trying to replicate an actively used database, even this option will not prevent these kind of errors.

To get beyond these kind of messages, you can skip parts of the logged SQL statements.

When looking at the “SHOW SLAVE STATUS”, you will see some “LOG_POS” fields, which help you determine at what position things went wrong. With a SKIP statement, we can skip these, in my situation I had to skip quite a few of these errors (my bad – trying to write an article caused a pretty big gap).

1

2

| SET GLOBAL SQL_SLAVE_SKIP_COUNTER = 1;

SLAVE START; |

The example above skips one error, and then continues. If you have more than one error, you can choose a higher number than 1 – just make sure not to skip any vital changes.

Once you’ve gone through all and “LAST_ERROR” and “LAST_SQL_ERROR” do not show a message, you’re up and running …

If however, you picked a rather large number, you might want to reset the SKIP_COUNTER to zero so that future errors will not be skipped:

1

2

3

| STOP SLAVE;

SET GLOBAL SQL_SLAVE_SKIP_COUNTER = 0;

SLAVE START; |

Comments

There are 18 comments. You can read them below.

You can post your own comments by using the form below, or reply to existing comments by using the "Reply" button.

Awesome – I have a similar setup (2x QNAP) and this was exactly what I was looking for to make a full backup work properly.

Weird that QNAP didn’t include something like this for replication isn’t it?

John

Thanks John …! Again!

I guess it was too complicated to implement for QNAP? All they have to do is replicate the MySQL data folder …

hans

This really useful. I have been struggling to implement Master-Slave Replication for a while, but coming across this post has got me out of the the pit!!!

But, this has so far worked only on the LAN setup.

Tomorrow I want to take it life to WAN setup.

Seboka

Hi Seboka!

Glad to hear that this article helped you on your way … thanks for posting it here, it’s very much appreciated …

Tip:

When replicating over WAN:

– Don’t forget to map the proper ports in your router (3306 usually), if your MySQL master runs at home.

– If your master runs on a webserver, then you might need to allow such “remote” direct access as well (different per setup how this is done – usually it involves a firewall setting and/or user access rights on the database itself).

hans

Thank you very much for this GREAT article! I have 3 Qnap, and now can synchronize my DBs. That’s great!

I installed kimai and now I have an online backup of my data. That’s great!

Holger

Hi Holger!

Great to hear that you’re enjoying the article and syncing your MySQL databases. I had not heard of Kimai before – so for those interested: this appears an Open Source Time Tracking tool. We learn something new every day …

…

Thanks again Holger for taking the effort to leave your positive feedback – it’s very much appreciated!

hans

Thanks for the article. I have implemented it successfully. However, I was just wondering if it is possible to also have autobackupmysql running on MASTER and SLAVE?

Thanks again.

Rose

Hi Rose,

Glad to hear this works for you as well!

I had to Google autobackupmyslq, and since they say it uses mysldump, I think it will work.

You might want to double check with the creator ,…

hans

Hi,

Great tutorial.

I am having one problem, after changing my,cnf and restart on the slave I get error when trying to login to phpmyadmin

#2002 – no such file or directory it says on the phpmyadmin login page.

Any suggestions?

Best regards

Robin Holum

Hi Robin,

Thank you for the compliment!

As for your error message;

Could it be that MySQL doesn’t even start (due to an error in the my.cnf file)?

hans

Adding these lines to my.cnf is working:

log-bin=mysql-binrelay-log=mysql-relay-bin.logserver-id=200binlog-ignore-db=test,information_schema,mysql

But when i add these lines i get the error when trying to logon as root in phpmyadmin on slave nas:

master-host=192.168.1.35master-user=slave_usermaster-password=password

Have checked that slave_user has correct password.

Robin Holum

thank you very much… i will test this later on a qnap NAS

do you let me use this info on my paperwork my friend?

i was asked to doa guide for my company…

David Sucesso

Hi David,

thank you very much for the thank-you post – it’s much appreciated.

Please, feel free to use this info in your paperwork – thank you for asking.

hans

thank you

i will put source with this website :)

David Sucesso

Awesome!

hans

Nicely written – very clear. Much appreciated.

Quintar Verbum

Thanks Quintar for the compliment and taking the time to post it here – it is very much appreciated!

Hans

Automatic MY SQL Backup to use application If you are using MY SQLDUMP, you should stop duplicating replica before starting the dump process to ensure that the dump contains a consistent set of data: Set the analogy to processing applications. You can stop complete duplication in simulation using My SQL ADMIN.

giftechies